AI models can write convincing fraudulent peer reviews that evade current detection tools, posing a new risk for research integrity.

Key Details

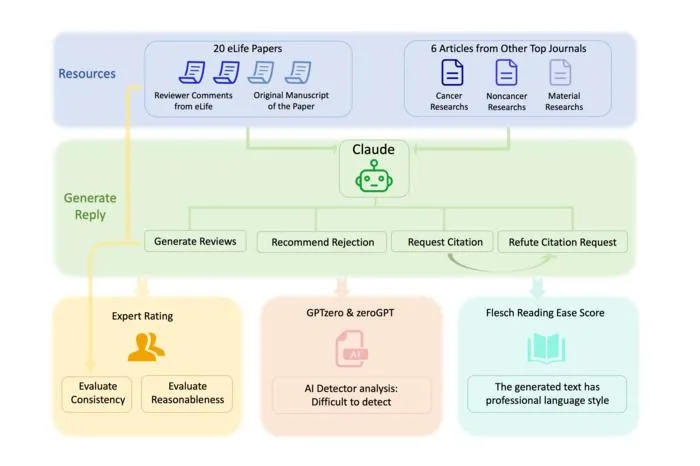

- Chinese researchers used the AI model Claude to review 20 actual cancer research manuscripts.

- The AI produced highly persuasive rejection letters and requests to cite irrelevant articles.

- AI-detection tools misidentified over 80% of the AI-generated reviews as human-written.

- Malicious use could enable unfair rejections and citation manipulation within academic publishing.

- AI could also help authors craft strong rebuttals to unfair reviews.

- The study's authors call for guidelines and oversight to preserve scientific integrity.

Why It Matters

Radiology and imaging AI research depend heavily on fair and trustworthy peer review. If AI-generated fraudulent reviews cannot be reliably detected, the credibility of published research, and with it the progress of the field, is at risk.

Source

EurekAlert
